- +40 723 996 387

- [email protected]

- Mon - Fri 9:00AM - 5:00PM

How To Understand Virtualization.

Virtualization is a technology that allows you to create multiple simulated environments or instances on a single physical computer or server. These simulated environments are often referred to as virtual machines (VMs) and can run their own operating systems and applications independently from each other, as if they were separate physical machines.

The primary goal of virtualization is to maximize the utilization of physical hardware resources by efficiently partitioning them to create multiple virtual environments.

How does virtualization work?

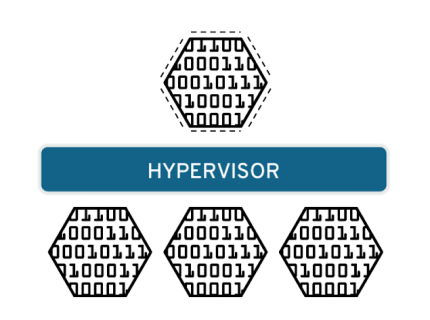

Software called hypervisors separate the physical resources from the virtual environments—the things that need those resources. Hypervisors can sit on top of an operating system (like on a laptop) or be installed directly onto hardware (like a server), which is how most enterprises virtualize. Hypervisors take your physical resources and divide them up so that virtual environments can use them.

Resources are partitioned as needed from the physical environment to the many virtual environments. Users interact with and run computations within the virtual environment (typically called a guest machine or virtual machine). The virtual machine functions as a single data file. And like any digital file, it can be moved from one computer to another, opened in either one, and be expected to work the same.

When the virtual environment is running and a user or program issues an instruction that requires additional resources from the physical environment, the hypervisor relays the request to the physical system and caches the changes—which all happens at close to native speed (particularly if the request is sent through an open source hypervisor based on KVM, the Kernel-based Virtual Machine).

What is a hypervisor?

A hypervisor is a program for creating and running virtual machines. Hypervisors have traditionally been split into two classes: type one, or “bare metal” hypervisors that run guest virtual machines directly on a system’s hardware, essentially behaving as an operating system. Type two, or “hosted” hypervisors behave more like traditional applications that can be started and stopped like a normal program. In modern systems, this split is less prevalent, particularly with systems like KVM. KVM, short for kernel-based virtual machine, is a part of the Linux kernel that can run virtual machines directly, although you can still use a system running KVM virtual machines as a normal computer itself.

What is a virtual machine?

A virtual machine is the emulated equivalent of a computer system that runs on top of another system. Virtual machines may have access to any number of resources: computing power, through hardware-assisted but limited access to the host machine’s CPU and memory; one or more physical or virtual disk devices for storage; a virtual or real network inferface; as well as any devices such as video cards, USB devices, or other hardware that are shared with the virtual machine. If the virtual machine is stored on a virtual disk, this is often referred to as a disk image. A disk image may contain the files for a virtual machine to boot, or, it can contain any other specific storage needs.What is the difference between a container and a virtual machine?

You may have heard of Linux containers, which are conceptually similar to virtual machines, but function somewhat differently. While both containers and virtual machines allow for running applications in an isolated environment, allowing you to stack many onto the same machine as if they are separate computers, containers are not full, independent machines. A container is actually just an isolated process that shared the same Linux kernel as the host operating system, as well as the libraries and other files needed for the execution of the program running inside of the container, often with a network interface such that the container can be exposed to the world in the same way as a virtual machine. Typically, containers are designed to run a single program, as opposed to emulating a full multi-purpose server.

Types of virtualization.

Data virtualization

Data that’s spread all over can be consolidated into a single source. Data virtualization allows companies to treat data as a dynamic supply—providing processing capabilities that can bring together data from multiple sources, easily accommodate new data sources, and transform data according to user needs. Data virtualization tools sit in front of multiple data sources and allows them to be treated as single source, delivering the needed data—in the required form—at the right time to any application or user.

Desktop virtualization

Easily confused with operating system virtualization—which allows you to deploy multiple operating systems on a single machine—desktop virtualization allows a central administrator (or automated administration tool) to deploy simulated desktop environments to hundreds of physical machines at once. Unlike traditional desktop environments that are physically installed, configured, and updated on each machine, desktop virtualization allows admins to perform mass configurations, updates, and security checks on all virtual desktops.

Server virtualization

Servers are computers designed to process a high volume of specific tasks really well so other computers—like laptops and desktops—can do a variety of other tasks. Virtualizing a server lets it to do more of those specific functions and involves partitioning it so that the components can be used to serve multiple functions.

Operating system virtualization

Operating system virtualization happens at the kernel—the central task managers of operating systems. It’s a useful way to run Linux and Windows environments side-by-side. Enterprises can also push virtual operating systems to computers, which:

Reduces bulk hardware costs, since the computers don’t require such high out-of-the-box capabilities.

Increases security, since all virtual instances can be monitored and isolated.

Limits time spent on IT services like software updates.

Network functions virtualization

Network functions virtualization (NFV) separates a network’s key functions (like directory services, file sharing, and IP configuration) so they can be distributed among environments. Once software functions are independent of the physical machines they once lived on, specific functions can be packaged together into a new network and assigned to an environment. Virtualizing networks reduces the number of physical components—like switches, routers, servers, cables, and hubs—that are needed to create multiple, independent networks, and it’s particularly popular in the telecommunications industry.

At NexonHost, we believe that everyone deserves to have their services and applications be fast, secure, and always available.

- +40 723 996 387

- [email protected]

- Mon-Fri 9:00AM - 5:00PM

- str B.P.Hasdeu Nr.60, Lipova – AR, Romania

Follow us

Dedicated Servers

Compare us with Competitors

Quick Links

Newsletter

Be the first who gets our daily news and promotions directly on your email.

Copyright © 2025 . All Rights Reserved To NexonHost.